It’s the perennial “cocktail party problem” – standing in a room full of people, drink in hand, trying to hear what your fellow guest is saying.

In fact, human beings are remarkably adept at holding a conversation with one person while filtering out competing voices.

However, perhaps surprisingly, it’s a skill that technology has until recently been unable to replicate.

And that matters when it comes to using audio evidence in court cases. Voices in the background can make it hard to be certain who’s speaking and what’s being said, potentially making recordings useless.

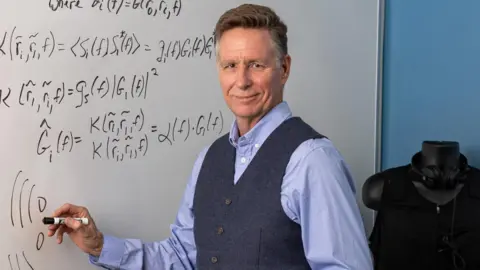

Electrical engineer Keith McElveen, founder and chief technology officer of Wave Sciences, became interested in the problem when he was working for the US government on a war crimes case.

“What we were trying to figure out was who ordered the massacre of civilians. Some of the evidence included recordings with a bunch of voices all talking at once – and that’s when I learned what the ‘cocktail party problem’ was,” he says.

“I had been successful in removing noise like automobile sounds or air conditioners or fans from speech, but when I started trying to remove speech from speech, it turned out not only to be a very difficult problem, it was one of the classic hard problems in acoustics.

“Sounds are bouncing round a room, and it is mathematically horrible to solve.”

The answer, he says, was to use AI to try to pinpoint and screen out all competing sounds based on where they originally came from in a room.

This doesn’t just mean other people who may be speaking – there’s also a significant amount of interference from the way sounds are reflected around a room, with the target speaker’s voice being heard both directly and indirectly.

In a perfect anechoic chamber – one totally free from echoes – one microphone per speaker would be enough to pick up what everyone was saying; but in a real room, the problem requires a microphone for every reflected sound too.

Mr McElveen founded Wave Sciences in 2009, hoping to develop a technology which could separate overlapping voices. Initially the firm used large numbers of microphones in what’s known as array beamforming.
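For readers curious about what array beamforming involves, the textbook form is known as delay-and-sum: shift each microphone's signal so the target voice lines up across all of them, then average. The sketch below is only an illustration of that general idea, not Wave Sciences' system. The four microphones, the delays in samples and the synthetic tone standing in for a voice are all assumptions made up for the example.

```python
import math
import random


def delay_and_sum(channels, delays):
    """Align each channel by its integer sample delay, then average them."""
    n = min(len(ch) - d for ch, d in zip(channels, delays))
    return [
        sum(ch[i + d] for ch, d in zip(channels, delays)) / len(channels)
        for i in range(n)
    ]


def power(signal):
    """Mean squared value of a signal."""
    return sum(x * x for x in signal) / len(signal)


random.seed(0)
n_samples = 2000

# Hypothetical target "voice": a slow sine tone.
target = [math.sin(2 * math.pi * 0.01 * i) for i in range(n_samples)]

# Assumed arrival delays (in samples) of the target at four microphones.
delays = [0, 3, 6, 9]

# Each microphone hears the delayed target plus its own independent noise.
channels = []
for d in delays:
    noise = [random.gauss(0, 1) for _ in range(n_samples + d)]
    delayed = [0.0] * d + target
    channels.append([s + n for s, n in zip(delayed, noise)])

output = delay_and_sum(channels, delays)

# Residual noise power: one raw microphone versus the beamformed output.
noise_before = power([c - t for c, t in zip(channels[0], target)])
noise_after = power([o - t for o, t in zip(output, target)])
print(f"noise power, single mic: {noise_before:.2f}")
print(f"noise power, 4-mic beam: {noise_after:.2f}")
```

Because the noise at each microphone is independent, averaging four aligned channels cuts its power to roughly a quarter while leaving the target untouched. Each extra microphone buys only a modest further improvement, which fits the complaint that good results needed a lot of hardware.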

However, feedback from potential commercial partners was that the system required too many microphones, at too great a cost, to give good results in many situations – and wouldn’t perform at all in many others.

“The common refrain was that if we could come up with a solution that addressed those concerns, they’d be very interested,” says Mr McElveen.

And, he adds: “We knew there had to be a solution, because you can do it with just two ears.”

The company finally solved the problem after 10 years of internally funded research and filed a patent application in September 2019.

What they had come up with was an AI that can analyse how sound bounces around a room before reaching the microphone or ear.

“We catch the sound as it arrives at each microphone, backtrack to figure out where it came from, and then, in essence, we suppress any sound that couldn’t have come from where the person is sitting,” says Mr McElveen.
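The company has not published its algorithm here, but the first step Mr McElveen describes, working out where a sound came from by comparing its arrival at each microphone, has a well-known textbook counterpart: estimating the time difference of arrival by cross-correlation. The sketch below shows that standard technique only. The sample rate, microphone spacing, seven-sample delay and random test signal are all assumptions chosen for illustration, and the room is treated as echo-free, which is exactly the simplification the real problem does not allow.

```python
import math
import random


def estimate_delay(mic_a, mic_b, max_lag):
    """Return the lag (in samples) at which mic_b best matches mic_a."""
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i, a in enumerate(mic_a):
            j = i + lag
            if 0 <= j < len(mic_b):
                score += a * mic_b[j]
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


random.seed(1)

# Hypothetical source signal; white noise makes the correlation peak sharp.
source = [random.gauss(0, 1) for _ in range(500)]

# Assumption: the sound reaches microphone B seven samples after microphone A.
true_delay = 7
mic_a = source
mic_b = [0.0] * true_delay + source[:-true_delay]

estimated = estimate_delay(mic_a, mic_b, max_lag=20)

# Convert the delay to a direction using the far-field relation
# sin(angle) = speed_of_sound * delay / spacing (all values assumed).
sample_rate = 16000      # samples per second
mic_spacing = 0.2        # metres between the two microphones
speed_of_sound = 343.0   # metres per second in air

ratio = speed_of_sound * (estimated / sample_rate) / mic_spacing
angle_deg = math.degrees(math.asin(max(-1.0, min(1.0, ratio))))
print(f"estimated delay: {estimated} samples, angle: {angle_deg:.1f} degrees")
```

In a real room, reflections add further peaks to the correlation, which is one reason a simple approach like this breaks down once voices overlap and echo.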

The effect is comparable in certain respects to when a camera focusses on one subject and blurs out the foreground and background.

“The results don’t sound crystal clear when you can only use a very noisy recording to learn from, but they’re still stunning.”

The technology had its first real-world forensic use in a US murder case, where the evidence it was able to provide proved central to the convictions.

After two hitmen were arrested for killing a man, the FBI wanted to prove that they’d been hired by a family going through a child custody dispute. The FBI arranged to trick the family into believing that they were being blackmailed for their involvement – and then sat back to see the reaction.

While texts and phone calls were reasonably easy for the FBI to access, in-person meetings in two restaurants were a different matter. But the court authorised the use of Wave Sciences’ algorithm, meaning that the audio went from being inadmissible to a pivotal piece of evidence.

Since then, other government laboratories, including in the UK, have put it through a battery of tests. The company is now marketing the technology to the US military, which has used it to analyse sonar signals.

It could also have applications in hostage negotiations and suicide scenarios, says Mr McElveen, to make sure both sides of a conversation can be heard – not just the negotiator with a megaphone.

Late last year, the company released a software application using its learning algorithm for use by government labs performing audio forensics and acoustic analysis.

Eventually it aims to introduce tailored versions of its product for use in audio recording kit, voice interfaces for cars, smart speakers, augmented and virtual reality, sonar and hearing aid devices.

So, for example, if you speak to your car or smart speaker, it wouldn’t matter if there was a lot of noise going on around you: the device would still be able to make out what you were saying.

AI is already being used in other areas of forensics too, according to forensic educator Terri Armenta of the Forensic Science Academy.

“ML [machine learning] models analyse voice patterns to determine the identity of speakers, a process particularly useful in criminal investigations where voice evidence needs to be authenticated,” she says.

“Additionally, AI tools can detect manipulations or alterations in audio recordings, ensuring the integrity of evidence presented in court.”

AI has also been making its way into other aspects of audio analysis.

Bosch has a technology called SoundSee that uses audio signal processing algorithms to analyse, for instance, a motor’s sound to predict a malfunction before it happens.

“Traditional audio signal processing capabilities lack the ability to understand sound the way we humans do,” says Dr Samarjit Das, director of research and technology at Bosch USA.

“Audio AI enables deeper understanding and semantic interpretation of the sound of things around us better than ever before – for example, environmental sounds or sound cues emanating from machines.”

More recent tests of the Wave Sciences algorithm have shown that, even with just two microphones, the technology can perform as well as the human ear – better, when more microphones are added.

And they also revealed something else.

“The math in all our tests shows remarkable similarities with human hearing. There’s little oddities about what our algorithm can do, and how accurately it can do it, that are astonishingly similar to some of the oddities that exist in human hearing,” says Mr McElveen.

“We suspect that the human brain may be using the same math – that in solving the cocktail party problem, we may have stumbled upon what’s really happening in the brain.”
